Load Balance VMware Platform Services Controller (PSC) with NSX 6.2

Introduced with vSphere 6, the **Platform Services Controller (PSC)** provides several core services, such as Certificate Authority, License service and Single Sign-On (SSO).

Multiple external PSCs can be deployed to serve one or more products, such as vCenter Server, Site Recovery Manager or vRealize Automation. When a Platform Services Controller serves multiple products, its availability must be considered, and in some cases deploying more than one PSC in a highly available architecture is recommended. When configured in high availability (HA) mode, the PSC instances replicate state information between each other, and the external products (vCenter Server, for example) interact with the PSCs through a load balancer.

This post covers the configuration of an HA PSC deployment using the load balancing feature of NSX-v 6.2.

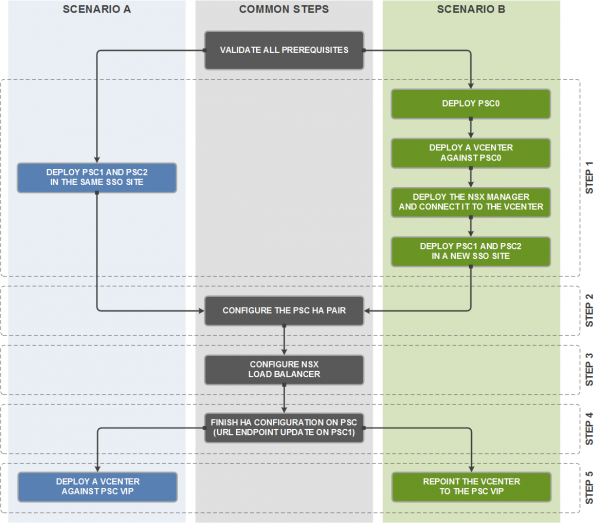

Due to the relationship between vCenter Server and NSX Manager, two different scenarios emerge:

- **Scenario A**, where both PSC nodes are deployed from an existing management vCenter. In this situation, the management vCenter is coupled with the NSX Manager that configures the Edge load balancer. There are no dependencies between the vCenter Server(s) that will use the PSC in HA mode and NSX itself.

- **Scenario B**, where there is no existing vCenter infrastructure (and thus no existing NSX deployment) when the first PSC is deployed. This is a classic “chicken and egg” situation, as the NSX Manager that is responsible for load balancing the PSC in HA mode is also connected to the vCenter Server that uses the PSC virtual IP.

While scenario A is straightforward, scenario B requires a specific order of operations to prevent any loss of connection to the Web Client during the procedure. The solution is to deploy a temporary PSC in a temporary SSO site to perform the load balancer configuration, and to repoint the vCenter Server to the PSC virtual IP at the end. Both paths are summarized in the workflow below.

I will cover only the first scenario in this post; a more complete article on the subject will be published tomorrow on the VMware Consulting Blog.

Environment

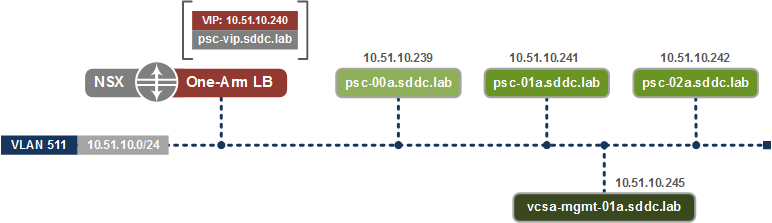

NSX Edge supports two load balancer deployment modes: one-arm mode and inline mode (also referred to as transparent mode). Although inline mode would also work, the NSX load balancer is deployed in one-arm mode here, because this model is more flexible and we don't require full visibility into the original client IP address.

Description of the environment:

- Software versions: VMware vCenter Server 6.0 U1 Appliance, ESXi 6.0 U1, NSX-v 6.2

- NSX Edge Services Gateway in one-arm mode

- Active/Passive configuration

- VLAN-backed portgroup (distributed portgroup on DVS)

- General PSC/vCenter and NSX prerequisites validated (NTP, DNS, resources, etc.)

To offer SSO in HA mode, two PSC servers have to be installed with NSX load balancing them in Active/Standby mode. PSC in Active/Active mode is currently not supported.

The following is a representation of the NSX-v and PSC logical design.

Procedure

You can take snapshots at regular intervals so that you can roll back in case of a problem.

Configure both PSCs as an HA pair

The following steps are based on KB 2113315, up to step D. I assume that two PSC nodes have just been deployed in the same SSO site.

- Download the PSC high availability configuration scripts from the Download vSphere page and extract the content to /ha on the PSC-01a and PSC-02a nodes. Note: refer to KB 2107727 to enable the Bash shell so that you can copy files into the appliance over SCP.

- Run the following command on the first PSC node:

`python gen-lb-cert.py --primary-node --lb-fqdn=load_balanced_fqdn --password=<yourpassword>`

The load_balanced_fqdn parameter is the FQDN of the PSC virtual IP on the load balancer. If you don't specify the `--password` option, the default password is `changeme`. In my situation, the command is:

`python gen-lb-cert.py --primary-node --lb-fqdn=psc-vip.sddc.lab --password=brucewayneisbatman`

- On the PSC-01a node, copy the content of the directory /etc/vmware-sso/keys to /ha/keys (a new directory that needs to be created).

- Copy the content of the /ha folder from the PSC-01a node to the /ha folder on the additional PSC-02a node (including the keys copied in the step before).

- Run the following command on the PSC-02a node:

`python gen-lb-cert.py --secondary-node --lb-fqdn=load_balanced_fqdn --lb-cert-folder=/ha --sso-serversign-folder=/ha/keys`

In my situation:

`python gen-lb-cert.py --secondary-node --lb-fqdn=psc-vip.sddc.lab --lb-cert-folder=/ha --sso-serversign-folder=/ha/keys`
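Because both nodes must be given exactly the same load-balanced FQDN, it can help to generate the two invocations from a single value. The following is a minimal Python 3.8+ sketch meant to run on an admin workstation; the function names `primary_cmd` and `secondary_cmd` are hypothetical, and only the flags shown in the steps above are used. It prints the commands rather than running them, since the scripts themselves live on the PSC appliances.

```python
"""Sketch: build the gen-lb-cert.py command lines for both PSC nodes from one FQDN."""
import shlex
from typing import List, Optional


def primary_cmd(lb_fqdn: str, password: Optional[str] = None) -> List[str]:
    """Command for the first PSC node (PSC-01a)."""
    cmd = ["python", "gen-lb-cert.py", "--primary-node", f"--lb-fqdn={lb_fqdn}"]
    if password is not None:
        # Without --password the script uses its default password ("changeme").
        cmd.append(f"--password={password}")
    return cmd


def secondary_cmd(lb_fqdn: str, cert_folder: str = "/ha",
                  serversign_folder: str = "/ha/keys") -> List[str]:
    """Command for the additional PSC node (PSC-02a)."""
    return [
        "python", "gen-lb-cert.py", "--secondary-node",
        f"--lb-fqdn={lb_fqdn}",
        f"--lb-cert-folder={cert_folder}",
        f"--sso-serversign-folder={serversign_folder}",
    ]


if __name__ == "__main__":
    fqdn = "psc-vip.sddc.lab"  # must be identical on both nodes
    print(shlex.join(primary_cmd(fqdn, "brucewayneisbatman")))
    print(shlex.join(secondary_cmd(fqdn)))
```

Running it prints the two command lines for psc-vip.sddc.lab, to be run from /ha on the respective node.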

Note: If you’re following KB 2113315, stop the configuration here (end of section C in the KB).

NSX configuration

An NSX Edge device must be deployed and configured for networking in the same subnet as the PSC nodes, with at least one interface for configuring the virtual IP. Before starting, enable the load balancer service (and optionally logging) under the Global Configuration menu of the Load Balancer tab. After that, the NSX configuration consists of the following steps:

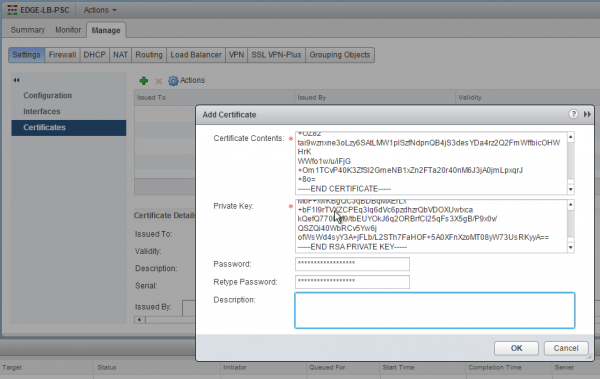

- Certificate import

- Application profile configuration

- Pool configuration

- Application rules configuration

- Virtual servers configuration

The first step is to import the certificate generated by the Python script on the first PSC node. Open the configuration of the NSX Edge Services Gateway that will load balance the PSC, and add a new certificate in the Settings > Certificates menu (under the Manage tab). Use the content of the previously generated /ha/lb.crt file as the load balancer certificate and the content of the /ha/lb_rsa.key file as the private key.
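Pasting a truncated or mismatched file is an easy mistake at this step. A quick local sanity check, assuming both files are PEM-encoded text (a BEGIN/END block around base64 data), might look like this; `looks_like_pem_cert`, `looks_like_pem_key` and `check_files` are hypothetical helpers. It only checks the structure of the text, not that the key matches the certificate, and an encrypted PEM key (with extra header lines) would not pass it.

```python
"""Sketch: structural check of the PEM files before pasting them into NSX."""
import re
from pathlib import Path

# A BEGIN/END CERTIFICATE block containing only base64 characters and whitespace.
CERT_RE = re.compile(
    r"-----BEGIN CERTIFICATE-----[A-Za-z0-9+/=\s]+-----END CERTIFICATE-----")
# Unencrypted PKCS#1 ("RSA PRIVATE KEY") or PKCS#8 ("PRIVATE KEY") block;
# the \1 back-reference forces the BEGIN and END labels to match.
KEY_RE = re.compile(
    r"-----BEGIN (RSA |)PRIVATE KEY-----[A-Za-z0-9+/=\s]+-----END \1PRIVATE KEY-----")


def looks_like_pem_cert(text: str) -> bool:
    return CERT_RE.search(text) is not None


def looks_like_pem_key(text: str) -> bool:
    return KEY_RE.search(text) is not None


def check_files(cert_path: str = "/ha/lb.crt", key_path: str = "/ha/lb_rsa.key") -> bool:
    """True if the certificate and key files both look like PEM blocks."""
    cert_ok = looks_like_pem_cert(Path(cert_path).read_text())
    key_ok = looks_like_pem_key(Path(key_path).read_text())
    return cert_ok and key_ok
```

Calling `check_files()` on a copy of the /ha folder before opening the NSX dialog catches an empty or half-copied file early.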

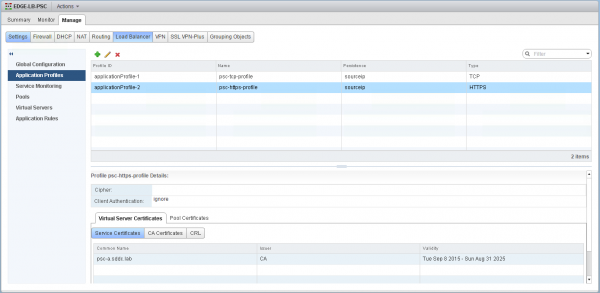

An application profile defines the behavior of a particular type of network traffic. Two application profiles have to be created: one for HTTPS protocol and one for other TCP protocols.

| Parameters | HTTPS Application Profile | TCP Application Profile |
|---|---|---|
| Name | psc-https-profile | psc-tcp-profile |
| Type | HTTPS | TCP |
| Enable Pool Side SSL | | |
| Configure Service Certificate | | |

Note: Leave the other parameters at their default values.

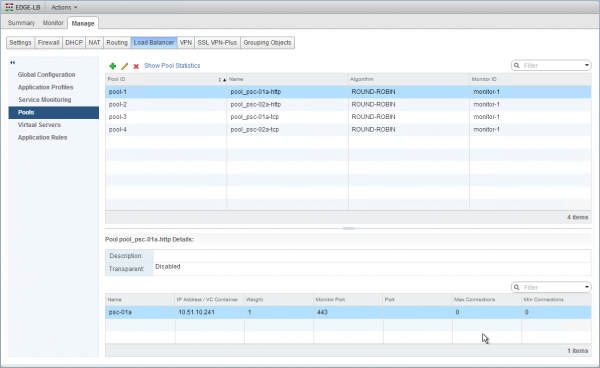

NSX load balancer virtual servers of type HTTP/HTTPS perform web protocol sanity checks on their backend pool. We do not want these sanity checks applied to the backend pool of the TCP virtual server. For that reason, separate pools must be created for the PSC HTTPS virtual IP and the TCP virtual IP.

In addition, because of the way SSO operates, it cannot be configured as active/active. The workaround in NSX is to configure two different pools (with one PSC instance per pool) for each virtual server and to use an application rule to switch between them in case of a failure. The application rule sends all traffic to the first pool as long as that pool is up, and switches to the second pool if the first PSC is down.
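The active/passive switching described above can be modeled in a few lines of Python. This is a toy model for reasoning about the behavior, not NSX code: `select_pool` is hypothetical, and the live-member counts stand in for the results of the pool health monitors.

```python
"""Toy model of the active/passive pool selection (not NSX code)."""
from typing import Dict


def select_pool(live_members: Dict[str, int], primary: str, secondary: str) -> str:
    """Pick the backend pool the way the failover rule does.

    live_members maps a pool name to the number of its members currently
    marked up by the health monitor; a missing pool counts as 0.
    """
    primary_down = live_members.get(primary, 0) == 0
    return secondary if primary_down else primary


if __name__ == "__main__":
    primary, secondary = "pool_psc-01a-http", "pool_psc-02a-http"
    timeline = [
        {primary: 1, secondary: 1},  # both PSCs healthy
        {primary: 0, secondary: 1},  # PSC-01a fails its monitor
        {primary: 1, secondary: 1},  # PSC-01a is back
    ]
    for state in timeline:
        print(select_pool(state, primary, secondary))
```

One consequence visible in the model: the decision is stateless, so traffic returns to the first PSC as soon as its monitor marks it up again, and it is still sent to the second pool even if that pool is down too.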

The first two pools are used by the HTTPS virtual server as its backend pools.

| Parameters | Pool 1 | Pool 2 |
|---|---|---|
| Name | pool_psc-01a-http | pool_psc-02a-http |
| Algorithm | ROUND-ROBIN | ROUND-ROBIN |
| Monitor | default_tcp_monitor | default_tcp_monitor |
| Members | psc-01a | psc-02a |
| Monitor Port | 443 | 443 |

The other two pools are used by the TCP virtual server as its backend pools.

| Parameters | Pool 3 | Pool 4 |
|---|---|---|
| Name | pool_psc-01a-tcp | pool_psc-02a-tcp |
| Algorithm | ROUND-ROBIN | ROUND-ROBIN |
| Monitor | default_tcp_monitor | default_tcp_monitor |
| Members | psc-01a | psc-02a |
| Monitor Port | 443 | 443 |

Note: while you could use a custom HTTPS health check, I selected the default TCP monitor in this example.

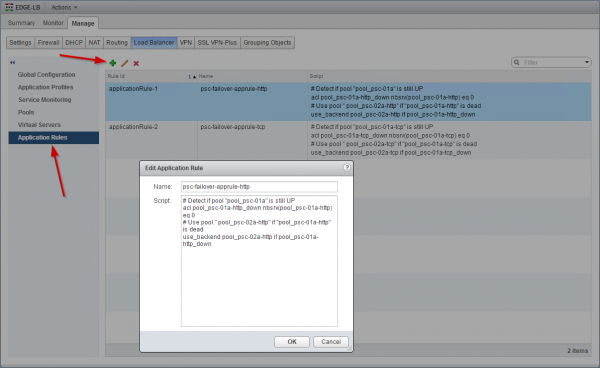

The next step is to create two application rules containing the logic that performs the failover between the pools (each containing one PSC), which implements the Active/Passive behavior of PSC HA mode. Each ACL checks whether the primary PSC pool is up; if it is not, the rule switches traffic to the secondary pool.

The first application rule will be used by the HTTPS virtual server to switch between the corresponding pools for the HTTPS backend server pools.

```
# Detect if pool "pool_psc-01a-http" is down (no member up)
acl pool_psc-01a-http_down nbsrv(pool_psc-01a-http) eq 0
# Use pool "pool_psc-02a-http" if "pool_psc-01a-http" is down
use_backend pool_psc-02a-http if pool_psc-01a-http_down
```

And the second application rule will be used by the TCP virtual server.

```
# Detect if pool "pool_psc-01a-tcp" is down (no member up)
acl pool_psc-01a-tcp_down nbsrv(pool_psc-01a-tcp) eq 0
# Use pool "pool_psc-02a-tcp" if "pool_psc-01a-tcp" is down
use_backend pool_psc-02a-tcp if pool_psc-01a-tcp_down
```
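Since the two application rules differ only in the pool names, they can be rendered from one template, which avoids typos such as a stray space inside a pool name. A minimal sketch; `failover_rule` is a hypothetical helper that only reproduces the `acl`/`use_backend` pattern of the rules above.

```python
"""Sketch: render the failover application rule for a primary/secondary pool pair."""


def failover_rule(primary: str, secondary: str) -> str:
    acl = f"{primary}_down"  # ACL is true when the primary pool has no live member
    lines = [
        f'# Detect if pool "{primary}" is down (no member up)',
        f"acl {acl} nbsrv({primary}) eq 0",
        f'# Use pool "{secondary}" if "{primary}" is down',
        f"use_backend {secondary} if {acl}",
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    for proto in ("http", "tcp"):
        print(failover_rule(f"pool_psc-01a-{proto}", f"pool_psc-02a-{proto}"))
        print()
```

The output can be pasted into the two application rules; any other pool naming scheme only needs a different pair of arguments.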

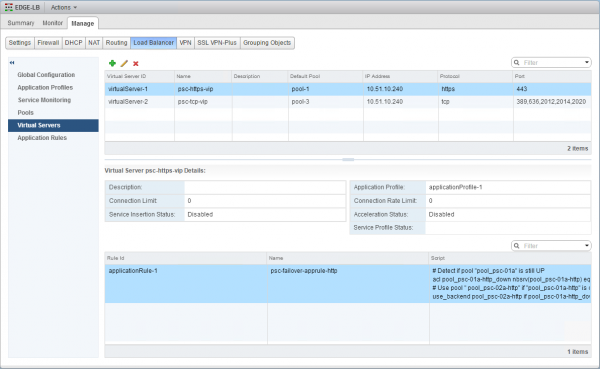

Finally, two virtual servers have to be created: one for HTTPS protocol and one for the other TCP protocols.

| Parameters | HTTPS Virtual Server | TCP Virtual Server |
|---|---|---|
| Application Profile | psc-https-profile | psc-tcp-profile |
| Name | psc-https-vip | psc-tcp-vip |
| IP Address | | |
| Protocol | HTTPS | TCP |
| Port | 443 | 389, 636, 2012, 2014, 2020 \* |
| Default Pool | pool_psc-01a-http | pool_psc-01a-tcp |
| Application Rules | psc-failover-apprule-http | psc-failover-apprule-tcp |

\* Although this procedure covers a fresh install, you could target the same architecture when upgrading SSO 5.5 to PSC. If you plan to upgrade from SSO 5.5 in HA mode, you must add the legacy SSO port 7444 to the list of ports of the TCP virtual server.
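Once both virtual servers exist, a quick reachability test from a machine on the same network can confirm that the VIP accepts connections on every configured port. A minimal sketch using only the Python standard library; `vip_ports` and `check_ports` are hypothetical helpers. A successful TCP connection only shows that something answers on that port through the VIP, not that SSO or the directory service behind it is healthy.

```python
"""Sketch: confirm the PSC VIP accepts TCP connections on each configured port."""
import socket
from typing import Dict, Iterable, List

# 443 on the HTTPS virtual server, the rest on the TCP virtual server.
PSC_VIP_PORTS = [443, 389, 636, 2012, 2014, 2020]


def vip_ports(upgrading_from_sso55: bool = False) -> List[int]:
    ports = list(PSC_VIP_PORTS)
    if upgrading_from_sso55:
        ports.append(7444)  # legacy SSO 5.5 port on the TCP virtual server
    return ports


def check_ports(host: str, ports: Iterable[int], timeout: float = 3.0) -> Dict[int, bool]:
    """Map each port to True if a TCP connection could be opened, else False."""
    results: Dict[int, bool] = {}
    for port in ports:
        try:
            with socket.create_connection((host, port), timeout=timeout):
                results[port] = True
        except OSError:  # refused, timed out, or name resolution failed
            results[port] = False
    return results
```

For example, `check_ports("psc-vip.sddc.lab", vip_ports())` returns a dictionary mapping each port to True or False; use `vip_ports(upgrading_from_sso55=True)` if you added port 7444.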

Now it’s time to finish the PSC HA configuration (step E of KB 2113315). Update the endpoint URLs on the PSC with the load_balanced_fqdn by running this command on the first PSC node:

`python lstoolHA.py --hostname=psc_1_fqdn --lb-fqdn=load_balanced_fqdn --lb-cert-folder=/ha --user=Administrator@vsphere.local`

In my situation:

`python lstoolHA.py --hostname=psc-01a.sddc.lab --lb-fqdn=psc-vip.sddc.lab --lb-cert-folder=/ha --user=Administrator@vsphere.local`

And that’s it! :)

If your NSX Manager was configured with the Lookup Service, you can now update that configuration to use the PSC virtual IP.

The complete procedure, including scenario B, is published on the VMware Consulting Blog: Configuring NSX-v Load Balancer for use with vSphere Platform Services Controller (PSC) 6.0.

Resources: